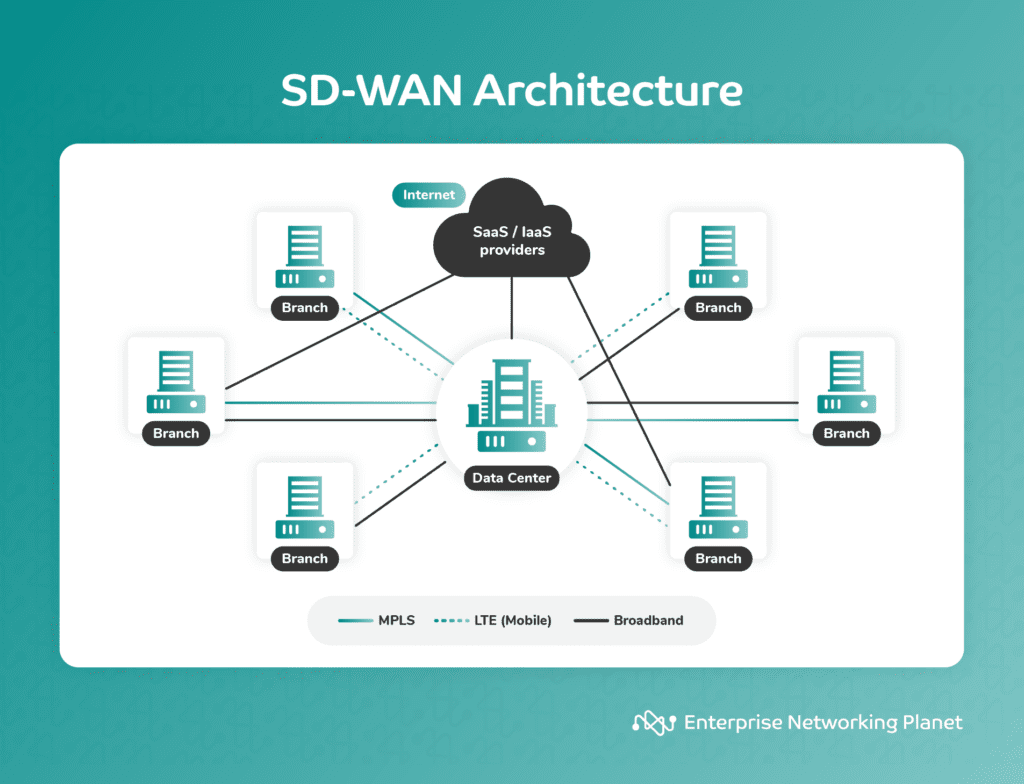

A software-defined wide area network (SD-WAN) is a networking technology that uses a software-based approach to manage and optimize the performance of a WAN. It enables enterprises to combine the capability of various transport services, including multiprotocol label switching (MPLS), long-term evolution (LTE), and broadband internet services, to connect users to applications securely.

As businesses grow, linking branch offices with headquarters in one larger network becomes necessary. However, traditional WAN technology has several limitations, especially regarding reliability and speed. SD-WAN addresses these issues, making it an increasingly popular WAN option.

What problems does SD-WAN solve?

Knowing the problems SD-WAN solves will help you understand how it can function in your organization. These problems include poor network connection quality, network downtime, and high infrastructure costs.

Quality of network connections

A standard WAN connection often sees high latency and packet loss, particularly as you move away from large metro areas with more plentiful bandwidth. Adding backup or secondary WAN links doesn’t help much when latency becomes an issue.

So how do you fix it?

SD-WAN can solve these problems by applying various techniques to relieve congestion on your network without requiring an overhaul of your existing infrastructure. For example, if latency issues affect your users on a particular link, SD-WAN could temporarily shift traffic to another link with less traffic. If one of your links goes down completely, SD-WAN could automatically reroute traffic through another link until repairs are made.
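
The link-shifting behavior described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Link` class, the `pick_link` function, the link names, and the 100 ms threshold are all hypothetical and do not correspond to any vendor's API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Link:
    name: str
    up: bool           # False if the link is down entirely
    latency_ms: float  # most recent measured latency


def pick_link(links: list[Link], preferred: str, max_latency_ms: float) -> Optional[Link]:
    """Stay on the preferred link unless it is down or too slow."""
    usable = [l for l in links if l.up]
    if not usable:
        return None  # nothing left to route over
    for link in usable:
        if link.name == preferred and link.latency_ms <= max_latency_ms:
            return link
    # Preferred link is down or over the threshold:
    # take the lowest-latency link that is still up.
    return min(usable, key=lambda l: l.latency_ms)


links = [
    Link("mpls", up=True, latency_ms=180.0),
    Link("broadband", up=True, latency_ms=35.0),
    Link("lte", up=True, latency_ms=60.0),
]
print(pick_link(links, preferred="mpls", max_latency_ms=100.0).name)  # broadband
```

Here the preferred link is still up but exceeds the latency threshold, so traffic moves to the fastest alternative. Real products weigh more signals than latency alone.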

Network downtime

Network downtime is a period when your network is inaccessible. Downtime can be planned or unplanned; this section focuses on unplanned downtime.

ITIC’s hourly cost of downtime survey revealed that 98% of respondents say a single hour of downtime costs over $100,000, and 81% of organizations indicated that the same period costs their business over $300,000.

SD-WAN helps limit interruptions and outages by making application failover seamless and straightforward. It offers automated failover capabilities, so if your primary connection fails, traffic is routed through a secondary link without human intervention. This automation frees IT staff to focus on other projects rather than being tied up monitoring their networks 24/7.
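
As a rough sketch of automated failover, the controller below switches to a secondary link after a run of failed health probes on the primary and switches back once the primary recovers. The class name, the link names, and the threshold of three failures are assumptions made for illustration, not any product's actual behavior.

```python
class FailoverController:
    """Switches to the secondary link after N consecutive failed probes of the primary."""

    def __init__(self, primary: str, secondary: str, fail_threshold: int = 3):
        self.primary = primary
        self.secondary = secondary
        self.fail_threshold = fail_threshold
        self.failures = 0
        self.active = primary

    def record_probe(self, primary_ok: bool) -> str:
        """Feed in one health-probe result for the primary; return the active link."""
        if primary_ok:
            self.failures = 0
            self.active = self.primary  # fail back once the primary recovers
        else:
            self.failures += 1
            if self.failures >= self.fail_threshold:
                self.active = self.secondary
        return self.active


ctl = FailoverController("mpls", "broadband", fail_threshold=3)
for ok in [True, False, False, False, True]:
    print(ctl.record_probe(ok))
# prints mpls, mpls, mpls, broadband, mpls (one per line)
```

Requiring several consecutive failures before switching is a common way to avoid flapping on a single lost probe. A production system would usually also wait before failing back.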

High network infrastructure costs

Managing multiple hardware-based routers, firewalls, and other networking devices across various locations incurs high costs and requires specialized IT expertise. With SD-WAN, organizations can significantly reduce bandwidth costs, and because SD-WAN is software-based, it reduces the need for expensive proprietary hardware at each site.

How SD-WAN works

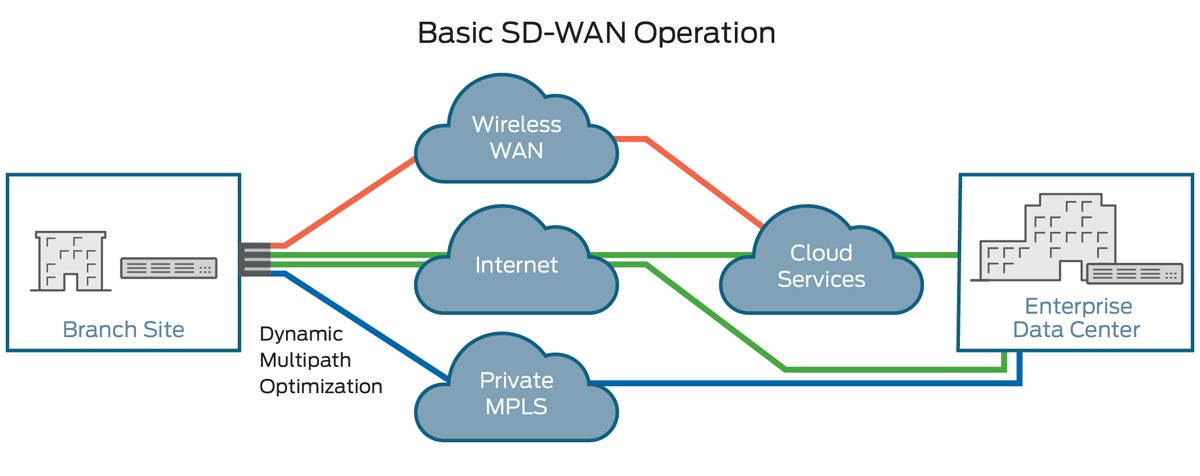

Traditional WAN services use Layer 2 and 3 virtual private networks (VPNs) to direct traffic to an internet gateway. SD-WAN uses centralized control to securely direct WAN traffic to SaaS and IaaS providers.

Unlike traditional routers that simply route packets from one location to another, SD-WAN uses a centralized controller, often delivered as a cloud service. The controller monitors network conditions across all your branch sites and dynamically routes traffic over the best available connection.

This means that network failures or congestion can be handled quickly with minimal impact on your organization’s productivity.

SD-WAN intelligently routes network traffic based on policies and conditions defined by administrators. It can determine the best path for specific types of traffic, such as critical business applications or real-time communication, to ensure optimal performance and reliability.
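
To make policy-based routing concrete, here is a minimal sketch in which an administrator defines latency and loss limits per application class, and each class is routed over the cheapest link that meets its limits. The `POLICIES` table, the thresholds, and the cost figures are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkStats:
    name: str
    latency_ms: float
    loss_pct: float
    cost_per_gb: float


@dataclass(frozen=True)
class Policy:
    max_latency_ms: float
    max_loss_pct: float


# Hypothetical administrator-defined policies, one per application class.
POLICIES = {
    "voip": Policy(max_latency_ms=150.0, max_loss_pct=1.0),
    "erp": Policy(max_latency_ms=300.0, max_loss_pct=2.0),
    "bulk-backup": Policy(max_latency_ms=1000.0, max_loss_pct=5.0),
}


def route(app_class: str, links: list[LinkStats]) -> str:
    """Return the name of the link to use for this application class."""
    policy = POLICIES[app_class]
    compliant = [
        l for l in links
        if l.latency_ms <= policy.max_latency_ms and l.loss_pct <= policy.max_loss_pct
    ]
    if compliant:
        # Among links that meet the policy, prefer the cheapest.
        return min(compliant, key=lambda l: l.cost_per_gb).name
    # No link meets the policy: fall back to the lowest-latency link.
    return min(links, key=lambda l: l.latency_ms).name


links = [
    LinkStats("mpls", latency_ms=40.0, loss_pct=0.1, cost_per_gb=0.50),
    LinkStats("broadband", latency_ms=200.0, loss_pct=1.5, cost_per_gb=0.05),
]
print(route("voip", links))         # mpls
print(route("bulk-backup", links))  # broadband
```

Voice traffic stays on the low-latency link, while backups that tolerate delay go over the cheaper one.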

SD-WAN leverages any combination of transport services — including MPLS, LTE, and broadband internet services — to dynamically select the most appropriate link for each application or traffic flow. This ensures efficient use of available bandwidth and improves the overall network performance.

SD-WAN vs. traditional WAN

Traditional WANs are expensive, inflexible, and difficult to manage. They require specialized skill sets for configuration, monitoring, troubleshooting, etc. These challenges are compounded when you have remote sites that need access to your corporate network.

And because traditional WAN solutions lack visibility into application performance, they’re not well suited for applications with strict quality of service (QoS) requirements.

By contrast, SD-WAN provides a more straightforward, cost-effective way to connect branch offices with headquarters. SD-WAN can improve security by offering built-in DDoS protection and end-to-end encryption for secure communications between sites.

Plus, it allows organizations to dynamically steer traffic based on application needs — ensuring that critical business data isn’t impacted by noncritical activity.

SD-WAN architecture

SD-WAN is a software layer that sits between an enterprise’s existing branch routers and its cloud provider, usually functioning as connective tissue between two disparate networks. The technology allows companies to connect multiple branches with various types of links or internet service providers (ISPs), creating a unified network no matter how many locations are involved.

To do so, it must automatically determine where data should be sent for optimal performance. This means that even if a user accesses their company’s VPN from home over their ISP connection, all of their traffic will be routed through whichever link provides optimal speed at any given time.

This makes it possible to create one cohesive virtualized network across all sites rather than managing each location separately.

5 features of SD-WAN

SD-WAN provides increased flexibility by letting you optimize various features and traffic flows, with or without IT intervention. At their core, SD-WAN solutions are built to work on your existing infrastructure while allowing you to scale as needed.

Support for multi-protocol label switching

Also known as MPLS, multi-protocol label switching forwards packets along predetermined paths using short labels rather than full routing lookups, which gives predictable performance for critical traffic. It is called multi-protocol because the labels can carry traffic from different network protocols. SD-WAN can use existing MPLS circuits alongside broadband and LTE links, so companies can adjust how they move data from one location to another depending on current needs.

Self-optimization

Because SD-WAN provides real-time monitoring and analytics and can adjust routing automatically, there is less need for staff to watch the network around the clock. Even without dedicated IT support at every site, the network can keep running efficiently because SD-WAN does much of the heavy lifting.

Real-time traffic shaping

SD-WAN provides real-time traffic-shaping capabilities, allowing businesses to prioritize different kinds of data. In addition to prioritizing certain types of data, companies can block unwanted content, such as malware and phishing attacks, before it reaches end users.
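
Prioritization can be illustrated with a strict-priority scheduler, which is one simple form of it; real traffic shapers typically also rate-limit each class. The `PRIORITY` map and class names below are assumptions for the sake of the example.

```python
import heapq
from itertools import count

# Hypothetical priority map: lower number = sent first.
PRIORITY = {"voip": 0, "video": 1, "web": 2, "backup": 3}


class PriorityScheduler:
    """Sends queued packets in priority order, FIFO within each class."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker keeps arrival order within a class

    def enqueue(self, traffic_class: str, packet: str) -> None:
        heapq.heappush(self._heap, (PRIORITY[traffic_class], next(self._seq), packet))

    def dequeue(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


sched = PriorityScheduler()
sched.enqueue("backup", "backup-1")
sched.enqueue("voip", "voip-1")
sched.enqueue("web", "web-1")
sched.enqueue("voip", "voip-2")
print([sched.dequeue() for _ in range(len(sched))])
# ['voip-1', 'voip-2', 'web-1', 'backup-1']
```

Voice packets leave first even though the backup packet arrived earlier, which is the effect prioritization is meant to achieve on a congested link.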

Visibility into applications

With complete visibility into applications within your organization, you’ll be able to see precisely where bottlenecks exist so that you can solve them quickly and easily without sacrificing performance. This insight benefits companies that rely heavily on bandwidth-heavy applications like videoconferencing, VoIP calls, and other cloud services.

Cloud connectivity

Businesses can save money and increase efficiency by connecting to several cloud platforms. If a company relies heavily on public clouds for backup purposes, having access to several providers makes it easier to ensure backups run smoothly.

Top 3 benefits of SD-WAN

SD-WAN helps businesses connect their locations remotely with more bandwidth, lower latency, and greater security than traditional networks that rely on hardware for processing power. Below are some additional benefits of SD-WAN.

Reduced OpEx

Moving to SD-WAN can significantly reduce your annual operating expenditure (OpEx) because you don’t need to invest in expensive hardware anymore — not to mention data center space, equipment maintenance, etc. Your software only requires a license fee and support, which is much cheaper than buying new networking equipment every few years.

Improved network performance

With a single internet connection, if that link fails, all traffic stops until another path is found. SD-WAN can spread traffic across multiple links, such as MPLS, broadband, and LTE, and fail over automatically when one of them goes down. So even if one link fails, the others keep working, which maintains connectivity.

Better reliability

SD-WAN can detect and respond to network problems faster and more efficiently than a human operator. It does so by continuously monitoring network health, performance, and availability.

SD-WAN detects disruptions in the network and responds accordingly. For example, if one link goes down due to failure, SD-WAN immediately reroutes traffic through other available paths without manual intervention. This helps your data reach its destination with minimal loss or delay.
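
Continuous monitoring usually comes down to sending probes over each link and summarizing the results. The sketch below is one hypothetical way to turn a batch of probe round-trip times into loss, latency, and jitter figures. The function name and the jitter definition (mean absolute difference between consecutive round-trip times) are assumptions, not a standard any vendor is known to follow.

```python
from statistics import mean
from typing import Optional


def summarize_probes(rtts_ms: list[Optional[float]]) -> dict:
    """rtts_ms holds one round-trip time per probe, or None for a lost probe."""
    if not rtts_ms:
        raise ValueError("need at least one probe result")
    received = [r for r in rtts_ms if r is not None]
    loss_pct = 100.0 * (len(rtts_ms) - len(received)) / len(rtts_ms)
    if not received:
        # Every probe was lost: the link is effectively down.
        return {"loss_pct": loss_pct, "avg_latency_ms": None, "jitter_ms": None}
    # Jitter here is the mean absolute difference between consecutive received RTTs.
    diffs = [abs(b - a) for a, b in zip(received, received[1:])]
    return {
        "loss_pct": loss_pct,
        "avg_latency_ms": mean(received),
        "jitter_ms": mean(diffs) if diffs else 0.0,
    }


stats = summarize_probes([20.0, 24.0, None, 22.0, 30.0])
print(stats["loss_pct"], stats["avg_latency_ms"])  # 20.0 24.0
```

A result with 100% loss is the signal that would trigger the rerouting described above.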

SD-WAN use cases

An SD-WAN service helps enterprises maximize productivity by creating resilient WAN networks that enhance application performance in uncertain or unreliable circumstances. This is achieved through intelligent path selection based on dynamic criteria such as cost, latency, bandwidth, jitter, and packet loss. This results in a highly reliable network with minimal downtime for critical applications.

With that in mind, common SD-WAN use cases could include:

- Application performance optimization: An SD-WAN solution can help you optimize your application performance across your entire network so that no matter where your employees are located, they have fast access to all company resources.

- Visibility into network operations and traffic: SD-WAN provides administrators with a bird’s-eye view of the network so they can quickly pinpoint issues in the network and take immediate steps toward resolution.

- Centralized management and control: SD-WAN solutions typically offer centralized management and control, providing a unified view of the entire network, including both branch offices and cloud resources.

- Multi-cloud access: Connect branches and a hybrid workforce to multi-cloud applications easily with unified visibility and management.

- Improved WAN resiliency, availability, and capacity: This is achieved through intelligent path selection based on dynamic criteria such as cost, latency, bandwidth, jitter, and packet loss.
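
Intelligent path selection over criteria such as cost, latency, bandwidth, jitter, and packet loss can be approximated with a weighted score. The weights and metric values below are invented for illustration; actual products use their own, often proprietary, scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PathMetrics:
    name: str
    cost: float            # relative cost, lower is better
    latency_ms: float
    bandwidth_mbps: float  # higher is better
    jitter_ms: float
    loss_pct: float


# Hypothetical weights expressing how much each criterion matters.
WEIGHTS = {
    "cost": 1.0,
    "latency_ms": 0.5,
    "jitter_ms": 2.0,
    "loss_pct": 20.0,
    "bandwidth_mbps": 0.1,
}


def score(p: PathMetrics) -> float:
    """Lower is better. Bandwidth is subtracted because more of it is good."""
    return (
        WEIGHTS["cost"] * p.cost
        + WEIGHTS["latency_ms"] * p.latency_ms
        + WEIGHTS["jitter_ms"] * p.jitter_ms
        + WEIGHTS["loss_pct"] * p.loss_pct
        - WEIGHTS["bandwidth_mbps"] * p.bandwidth_mbps
    )


def best_path(paths: list[PathMetrics]) -> PathMetrics:
    return min(paths, key=score)


paths = [
    PathMetrics("mpls", cost=10.0, latency_ms=30.0, bandwidth_mbps=100.0, jitter_ms=2.0, loss_pct=0.1),
    PathMetrics("broadband", cost=2.0, latency_ms=60.0, bandwidth_mbps=500.0, jitter_ms=10.0, loss_pct=1.0),
    PathMetrics("lte", cost=6.0, latency_ms=80.0, bandwidth_mbps=50.0, jitter_ms=15.0, loss_pct=2.0),
]
print(best_path(paths).name)  # mpls
```

With these weights, MPLS narrowly beats broadband because loss and jitter are penalized heavily. Changing the weights changes the winner, which is how different applications can end up on different paths.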

Is SD-WAN secure?

The short answer is, “yes, but.”

While SD-WAN offers many productivity-related benefits, including optimized performance, network reliability, flexibility, and cost reduction, if not implemented properly it may actually expose you to greater security risks.

With SD-WAN, traffic can flow directly from branch locations to the public internet, which means it may bypass the traditional security measures in place at headquarters or the data center. This could leave the network vulnerable to external threats.

Nonetheless, SD-WAN can be secure, but the level of security depends on various factors, including the specific implementation, configuration, and security measures put in place. Many organizations even consider SD-WAN to enhance their network security as it provides several security features and benefits such as encryption, firewalls, segmentation, and centralized security management.

SD-WAN deployment types

Enterprises can choose to deploy SD-WAN using one of three available models: managed, DIY, or hybrid.

Managed

In this model, companies outsource all their SD-WAN needs to a managed service provider (MSP). The service provider is responsible for configuring, monitoring, and maintaining the SD-WAN network on behalf of the enterprise.

This model offers convenience and reduces the burden on IT staff, allowing them to focus on other priorities. However, it may limit the level of control and customization available to the enterprise.

Do-it-yourself (DIY)

The DIY model gives enterprises complete ownership of deploying and managing their SD-WAN solution.

An organization acquires the necessary SD-WAN resources directly from a vendor and maintains the network in-house. The in-house IT team is responsible for maintaining the company’s own SD-WAN equipment, connections, and software.

A DIY approach provides the highest level of control and customization but requires significant expertise and resources from the enterprise. Large enterprises looking for full network controls may find this deployment model appealing, but it may be out of reach for most small businesses.

Hybrid

The hybrid deployment model combines elements of both DIY and managed approaches. The enterprise retains control over some aspects of the SD-WAN implementation while leveraging the expertise and support of an MSP. The service provider may handle certain parts of the deployment and management while the enterprise controls specific functions or policies.

Top 3 SD-WAN vendors

Although the three SD-WAN providers below offer extensive functionality and security capabilities, they may not be the best fit for every business. If they do not meet your needs, we reviewed the best SD-WAN vendors to help you determine the best solution for your company.

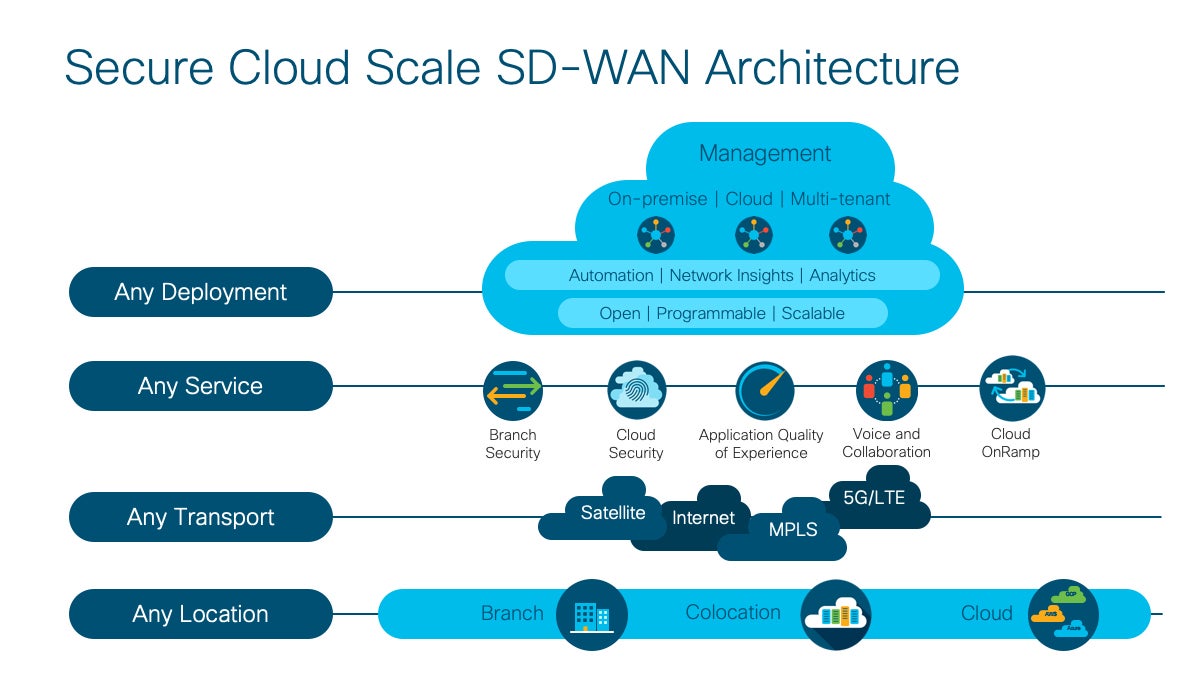

Cisco

Cisco offers a cloud-based SD-WAN overlay fabric that allows enterprises to connect data centers, branches, campuses, and colocation facilities to improve network performance. Managed through the Cisco vManage console, the solution separates data and control planes to provide centralized management and control.

Cisco SD-WAN key features include:

- Advanced multi-cloud and SaaS, analytics, and visibility.

- Web content filtering.

- Advanced SD-WAN Layer 2 and Layer 3 routing — general.

- SD-WAN Layer 2 and Layer 3 Multicast routing — IPv4.

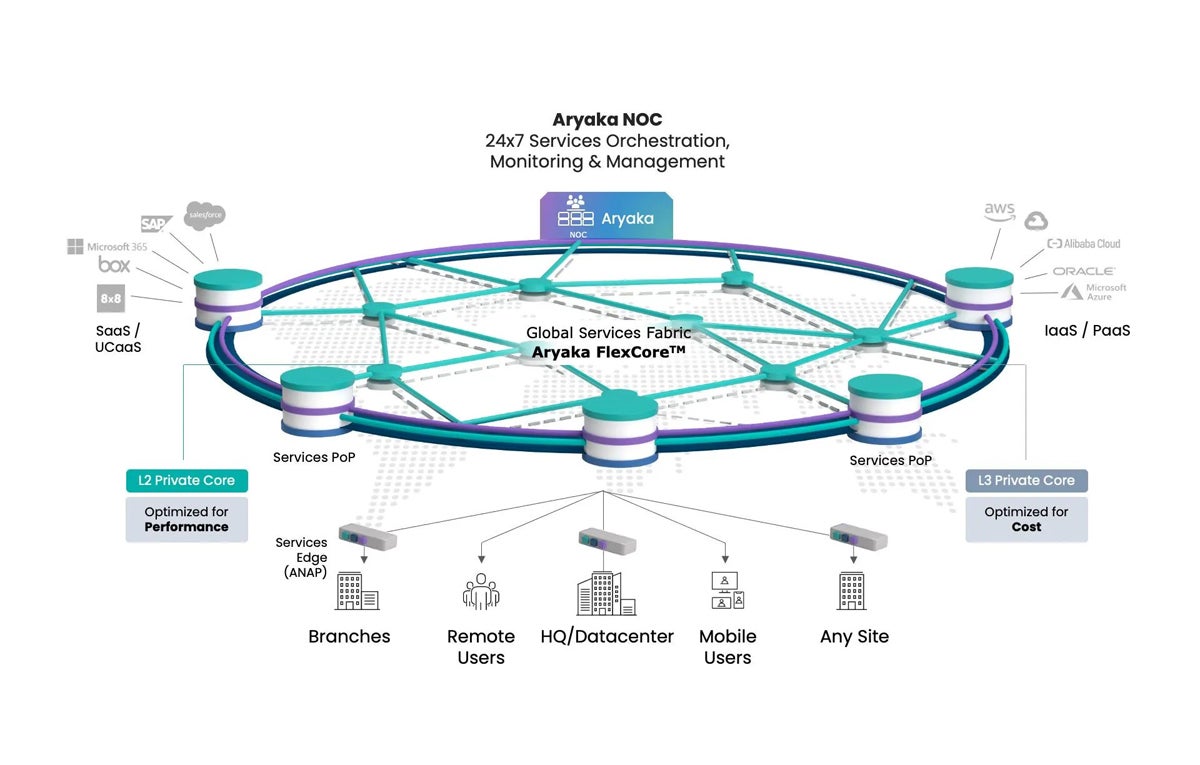

Aryaka

Aryaka is a managed SD-WAN service provider. Their SD-WAN service is built on a high-performance global FlexCore network, giving organizations a robust and flexible Network-as-a-Service to connect sites, users, and cloud workloads, regardless of location.

Aryaka SD-WAN key capabilities include:

- Availability with up to 99.999% uptime.

- White-glove and co-management options.

- MPLS interworking and hybrid WAN.
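
For context on what an uptime percentage like 99.999% means in practice, the short calculation below converts it into allowed downtime per year. It is plain arithmetic and says nothing about how any provider measures or guarantees uptime.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def downtime_minutes_per_year(uptime_pct: float) -> float:
    """Minutes per year a service may be down at the given uptime percentage."""
    return (100.0 - uptime_pct) / 100.0 * MINUTES_PER_YEAR


for pct in (99.9, 99.99, 99.999):
    print(f"{pct}% uptime -> {downtime_minutes_per_year(pct):.2f} minutes of downtime per year")
# 99.9% uptime -> 525.60 minutes of downtime per year
# 99.99% uptime -> 52.56 minutes of downtime per year
# 99.999% uptime -> 5.26 minutes of downtime per year
```

In other words, "five nines" leaves room for a little over five minutes of downtime in a year.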

Juniper Networks

Juniper Networks SD-WAN leverages AI and the Juniper Mist Cloud Architecture to provide an intelligent and automated SD-WAN solution. Their solution integrates with Juniper’s Mist AI-driven networking platform to provide end-to-end visibility and control. Juniper’s SD-WAN solution offers key features such as zero-touch provisioning (ZTP), centralized management, and advanced analytics for monitoring and troubleshooting.

Juniper Networks key features include:

- Fast deployment with automated templating tools and ZTP.

- Branch-office communications with cloud-managed routing, switching, Wi-Fi, and security.

- AI-based insights and automated troubleshooting.

Bottom line: SD-WAN improves network performance with proper planning

SD-WAN is beneficial to organizations looking to improve their network performance and reduce costs. Businesses that are considering implementing this technology should carefully evaluate their specific needs and consider SD-WAN challenges.

SD-WAN can provide organizational advantages such as increased bandwidth, improved network security, and centralized management, but like any technology, it requires proper planning, deployment, and monitoring to succeed.

If you’re considering implementing SD-WAN, make sure you check out our complete guide to the best SD-WAN providers — and how to choose between them.